Introduction: What is web scraping?

Overview

Teaching: 10 min

Exercises: 0 minQuestions

What is web scraping and why is it useful?

What are typical use cases for web scraping?

Objectives

Introduce the concept of structured data

Discuss how data can be extracted from webpages

Introduce the examples that will be used in this lesson

What is web scraping?

Web scraping is a technique for extracting information from websites. This can be done manually but it is usually faster, more efficient and less error-prone to automate the task.

Web scraping allows you to acquire non-tabular or poorly structured data from websites and convert it into a usable, structured format, such as a .csv file or spreadsheet.

Scraping is about more than just acquiring data: it can also help you archive data and track changes to data online.

It is closely related to the practice of web indexing, which is what search engines like Google do when mass-analysing the Web to build their indices. But contrary to web indexing, which typically parses the entire content of a web page to make it searchable, web scraping targets specific information on the pages visited.

For example, online stores will often scour the publicly available pages of their competitors, scrape item prices, and then use this information to adjust their own prices. Another common practice is “contact scraping” in which personal information like email addresses or phone numbers is collected for marketing purposes.

Web scraping is also increasingly being used by scholars to create data sets for text mining projects; these might be collections of journal articles or digitised texts. The practice of data journalism, in particular, relies on the ability of investigative journalists to harvest data that is not always presented or published in a form that allows analysis.

Before you get started

As useful as scraping is, there might be better options for the task. Choose the right (i.e. the easiest) tool for the job.

- Check whether or not you can easily copy and paste data from a site into Excel or Google Sheets. This might be quicker than scraping.

- Check if the site or service already provides an API to extract structured data. If it does, that will be a much more efficient and effective pathway. Good examples are the Facebook API, the Twitter APIs or the YouTube comments API.

- For much larger needs, Freedom of information Act (FOIA)requests can be useful (visit foia.gov). Be specific about the formats required for the data you want.

Example: Scraping UCSB department websites for faculty contact information

In this lesson, we will extract contact information from UCSB department faculty pages. This example came from a recent real-life scenario when your instructors for today needed to make lists of social sciences faculty for outreach reasons. There is no overarching list of faculty, contact information, and study area available for the university as a whole. This was made even more difficult by the fact that each UCSB department has webpages with wildly different formatting. Today we will see examples using the Chrome Scraper extension. Another technique is to use the Python programming language and a the Scrapy library, which is covered in a previous version of this workshop given here. There are different scenarios when one might be a better choice than the other.

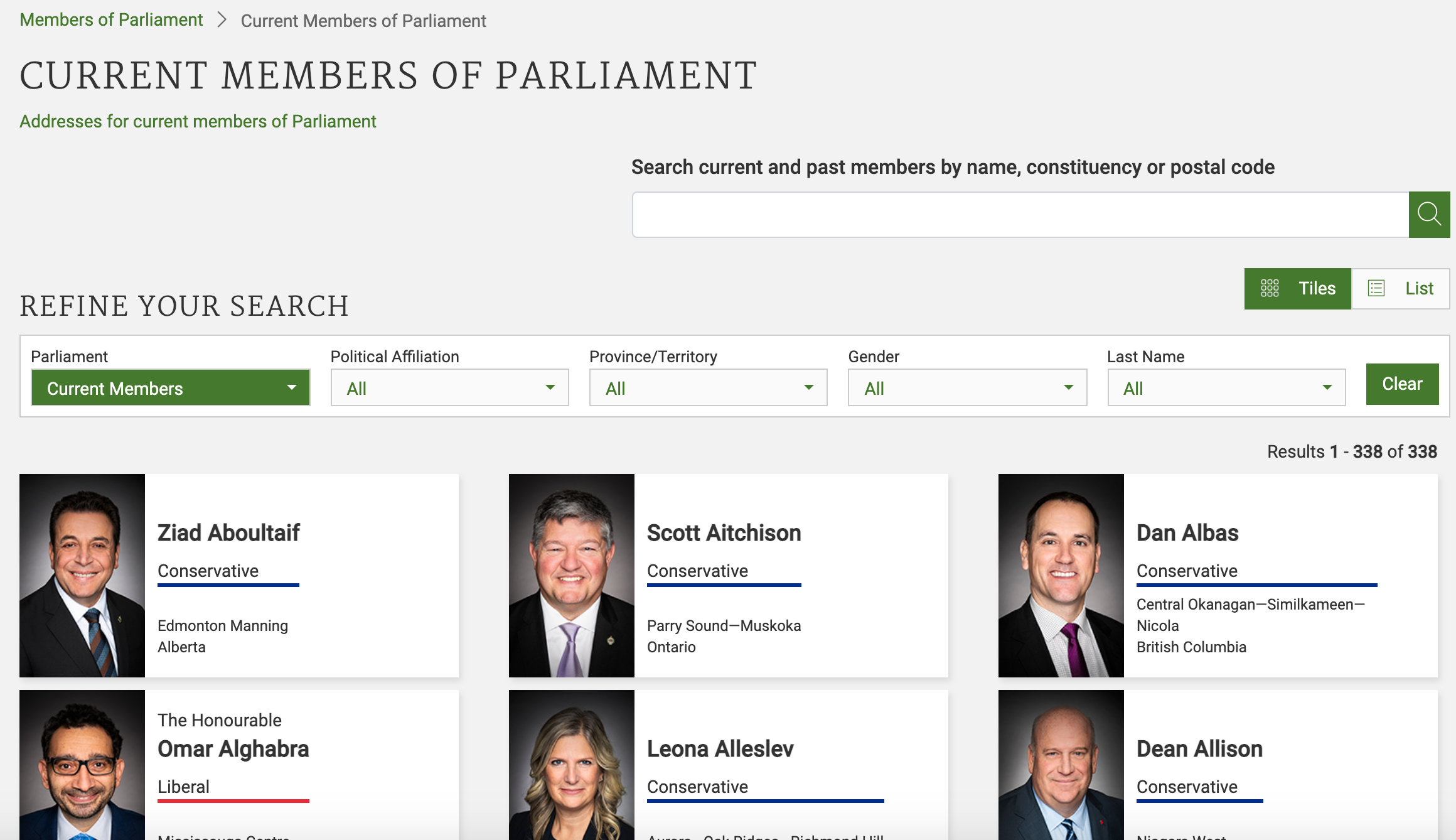

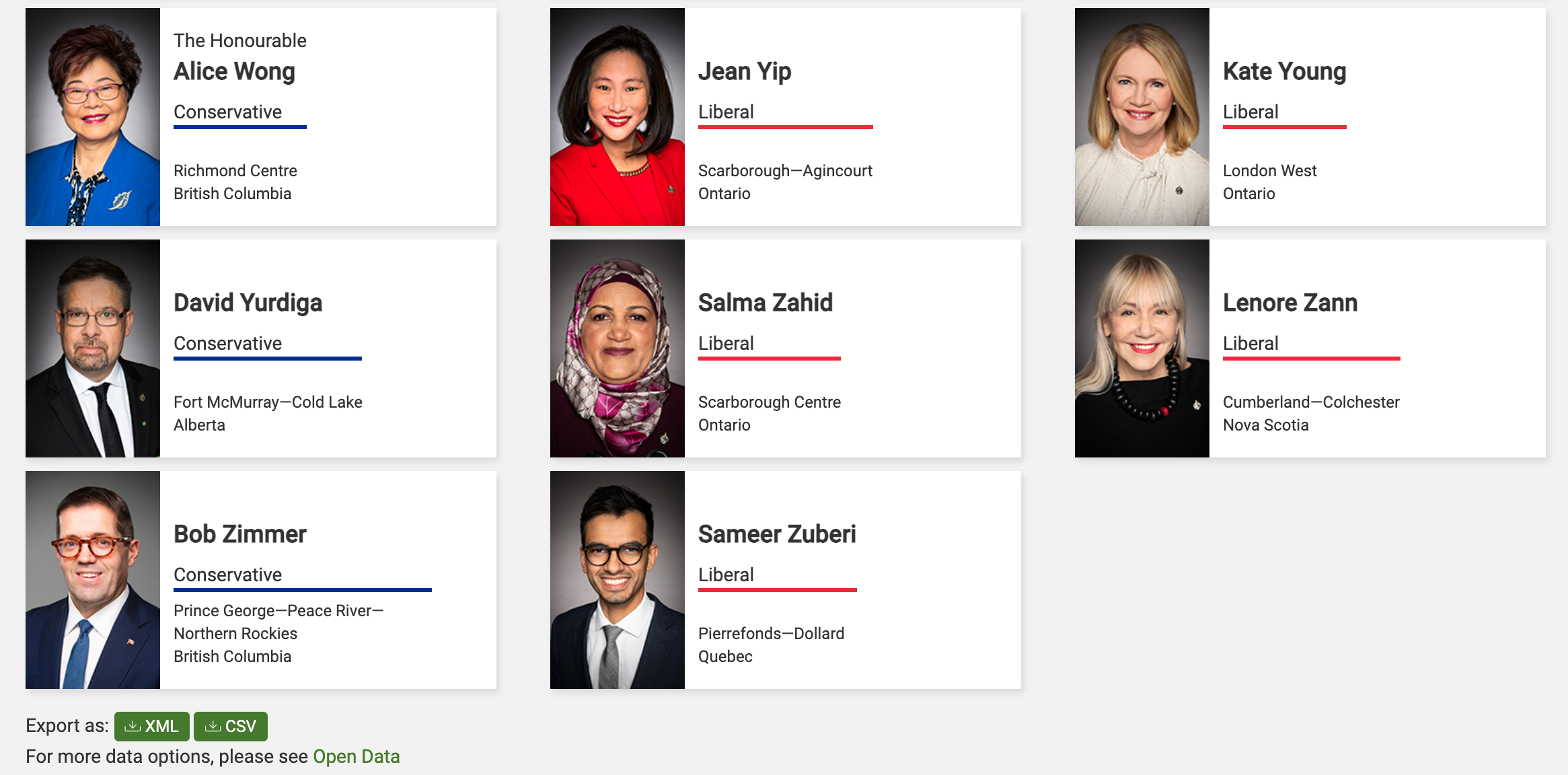

Let’s start by looking at how data on websites can be more or less structured. Let’s look first at the current list of members of the Canadian parliament, which is available on the Parliament of Canada website. This is how this page looks like as of February, 7 2021:

There are several features that make the data on this page easier to work with. The search, reorder, refine features and display modes hint that the data is actually stored in a (structured) database before being displayed on this page. Also, when we scroll down, we can see that this data can be readily downloaded either as a comma separated values (.csv) file or as XML for re-use in their own database, spreadsheet or computer program.

Even though the information displayed in the view above is not labelled, anyone visiting this site with some knowledge of Canadian geography and politics can see what information pertains to the politicians’ names, the geographical area they come from and the political party they represent. This is because human beings are good at using context and prior knowledge to quickly categorise information.

Computers, on the other hand, cannot do this unless we provide them with more information. Fortunately, if we examine the source HTML code of this page, we can see that the information displayed is actually organised inside labelled elements:

(...)

<div class="ce-mip-mp-tile-container " id="mp-tile-person-id-89156">

<a class="ce-mip-mp-tile" href="/Members/en/ziad-aboultaif(89156)">

<div class="ce-mip-flex-tile">

<div class="ce-mip-mp-picture-container">

<img class="ce-mip-mp-picture visible-lg visible-md img-fluid" src="/Content/Parliamentarians/Images/OfficialMPPhotos/43/AboultaifZiad_CPC.jpg"

alt="Photo - Ziad Aboultaif - Click to open the Member of Parliament profile">

</div>

<div class="ce-mip-tile-text">

<div class="ce-mip-tile-top">

<div class="ce-mip-mp-honourable"></div>

<div class="ce-mip-mp-name">Ziad Aboultaif</div>

<div class="ce-mip-mp-party" style="border-color:#002395;">Conservative</div>

</div>

<div class="ce-mip-tile-bottom">

<div class="ce-mip-mp-constituency">Edmonton Manning</div>

<div class="ce-mip-mp-province">Alberta</div>

</div>

</div>

</div>

<div>

</div>

</a>

</div>

(...)

Thanks to these labels, we could relatively easily instruct a computer to look for all parliamentarians from Alberta and list their names and caucus information.

Structured vs unstructured data

When presented with information, human beings are good at quickly categorizing it and extracting the data that they are interested in. For example, when we look at a magazine rack, provided the titles are written in a script that we are able to read, we can rapidly figure out the titles of the magazines, the stories they contain, the language they are written in, etc. and we can probably also easily organize them by topic, recognize those that are aimed at children, or even whether they lean toward a particular end of the political spectrum. Computers have a much harder time making sense of such unstructured data unless we specifically tell them what elements data is made of, for example by adding labels such as this is the title of this magazine or this is a magazine about food. Data in which individual elements are separated and labelled is said to be structured.

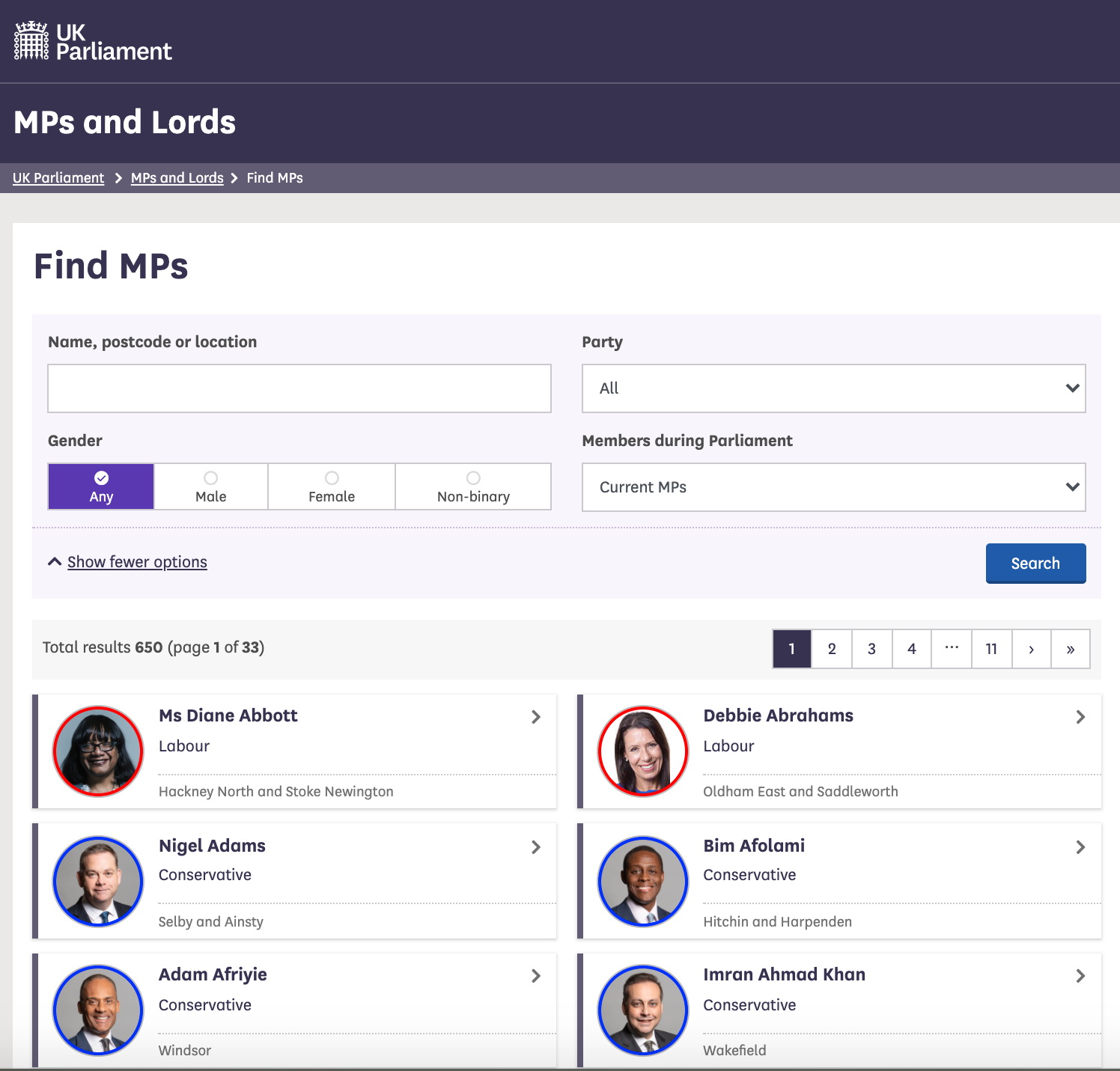

Let’s look now at the current list of members for the UK House of Commons.

This page also displays a list of names, political and geographical affiliation. There is a search box and

a filter option, but no obvious way to download this information and reuse it. Here is a portion of the code for this page:

(...)

<div class="card-list card-list-2-col">

<a class="card card-member" href="/member/172/contact">

<div class="card-inner">

<div class="content">

<div class="image-outer">

<div class="image"

aria-label="Image of Ms Diane Abbott"

style="background-image: url(https://members-api.parliament.uk/api/Members/172/Thumbnail); border-color: #ff0000;"></div>

</div>

<div class="primary-info">

Ms Diane Abbott

</div>

<div class="secondary-info">

Labour

</div>

</div>

<div class="info">

<div class="info-inner">

<div class="indicators-left">

<div class="indicator indicator-label">

Hackney North and Stoke Newington

</div>

</div>

<div class="clearfix"></div>

</div>

</div>

</div>

</a>

(...)

What if we wanted to download this data and, for example, compare it with the Canadian list of MPs to analyze gender representation, or the representation of political forces in the two groups? We could try copy-pasting the entire table into a spreadsheet or even manually copy-pasting the names and parties in another document, but this can quickly become impractical when faced with a large set of data. What if we wanted to collect this information for every country that has a parliamentary system?

Fortunately, there are tools to automate at least part of the process. This technique is called web scraping.

“Web scraping (web harvesting or web data extraction) is a computer software technique of extracting information from websites.” (Source: Wikipedia)

Web scraping typically targets one web site at a time to extract unstructured information and put it in a structured form for reuse.

In this lesson, we will continue exploring examples and follow techniques to extract the information they contain. But before we launch into web scraping proper, we need to look a bit closer at how information is organized within an HTML document and how to build queries to access a specific subset of that information, by understanding XPath.

References

Key Points

Humans are good at categorizing information, computers not so much.

Often, data on a web site is not properly structured, making its extraction difficult.

Web scraping is the process of automating the extraction of data from websites.